Introduction

Artificial intelligence is changing how digital audio is created. Businesses, educators, developers, and media creators increasingly use automated voice systems instead of traditional recording setups. AI speech tools now aim to replicate natural tone, pacing, and emotional expression with greater accuracy than earlier text-to-speech systems.

ElevenLabs is a technology company focused on advanced speech synthesis and voice modeling. Its platform provides tools for generating realistic audio from text, cloning voices, and integrating voice features into applications. This article presents a structured, independent overview of the platform, its functionality, strengths, limitations, and suitable use cases.

What Is ElevenLabs?

ElevenLabs is an AI software company that develops deep learning–based voice generation technology. The platform converts written content into spoken audio and allows users to build custom digital voice profiles.

The system is designed for both non-technical users and developers. Content creators can use the web interface to generate narration, while technical teams can connect to the platform through APIs for automated voice workflows.

Its primary focus is realism. Instead of producing flat or robotic speech, the system attempts to capture natural variation in tone and delivery.

How the Technology Works

ElevenLabs uses neural network models trained on diverse speech datasets. These models learn pronunciation patterns, intonation shifts, and contextual emphasis. When text is entered, the system analyzes sentence structure and generates corresponding vocal output.

The general process includes:

- Inputting text into the platform

- Selecting a prebuilt or custom voice model

- Adjusting parameters such as stability and similarity

- Generating and exporting audio files

For voice cloning, users upload sample recordings. The system analyzes vocal characteristics and builds a reusable voice model capable of speaking new content in a similar tone and style.

Developers can access these capabilities through programmable interfaces, enabling integration into apps, websites, or enterprise systems.
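As a rough illustration of that workflow (text in, voice chosen, parameters set, audio out), the sketch below builds a single text-to-speech request using only the Python standard library. The endpoint path, the `xi-api-key` header, and the `voice_settings` field names reflect how the public REST API is commonly documented, but treat them as assumptions and check the current API reference before relying on them. The helper names `build_tts_request` and `synthesize_to_file` are hypothetical.

```python
import json
import urllib.request

# Assumed base URL and path layout; confirm against the official API reference.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(text, voice_id, api_key, stability=0.5, similarity_boost=0.75):
    """Build (but do not send) an HTTP request for one text-to-speech call."""
    payload = {
        "text": text,
        # Field names below are assumptions about the request schema.
        "voice_settings": {
            "stability": stability,
            "similarity_boost": similarity_boost,
        },
    }
    return urllib.request.Request(
        url=f"{API_BASE}/text-to-speech/{voice_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def synthesize_to_file(request, out_path):
    """Send the request and write the returned audio bytes to disk."""
    with urllib.request.urlopen(request) as response, open(out_path, "wb") as f:
        f.write(response.read())
```

Separating request construction from sending keeps the first function easy to inspect and test without network access or a valid API key; only `synthesize_to_file` touches the service.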

Main Features

Advanced Text-to-Speech

The core function transforms written scripts into spoken audio. The output aims to reflect natural pacing and emotional inflection rather than monotone delivery.

Custom Voice Cloning

Users can create digital voice replicas from audio samples. This allows consistent narration across multiple projects or branded content streams.

Multilingual Capabilities

The platform supports multiple languages and accents, which helps organizations localize content without recording separate voice actors for each region.

Adjustable Voice Parameters

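Controls such as clarity and stability trade expressiveness against consistency. Lower stability generally allows more varied, expressive delivery, while higher stability keeps the voice more uniform from one generation to the next. These settings help fine-tune output quality.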
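One way to keep such settings manageable in code is to store them as small, validated presets. The sketch below is a hypothetical helper, not part of any official SDK: the `VoiceSettings` class, the preset names, and the assumed 0.0 to 1.0 range for each control are illustrative choices.

```python
from dataclasses import dataclass, asdict


def _clamp(value, low=0.0, high=1.0):
    """Force a numeric setting into the assumed valid range."""
    return max(low, min(high, float(value)))


@dataclass(frozen=True)
class VoiceSettings:
    stability: float = 0.5
    clarity: float = 0.75

    def __post_init__(self):
        # The dataclass is frozen, so clamped values are stored
        # through object.__setattr__ rather than plain assignment.
        object.__setattr__(self, "stability", _clamp(self.stability))
        object.__setattr__(self, "clarity", _clamp(self.clarity))


# Hypothetical presets: lower stability for expressive reads,
# higher stability for uniform narration.
EXPRESSIVE = VoiceSettings(stability=0.3, clarity=0.8)
CONSISTENT = VoiceSettings(stability=0.8, clarity=0.8)
```

Calling `asdict(CONSISTENT)` yields a plain dictionary that can be merged into a request payload, and out-of-range inputs are clamped rather than passed through unchanged.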

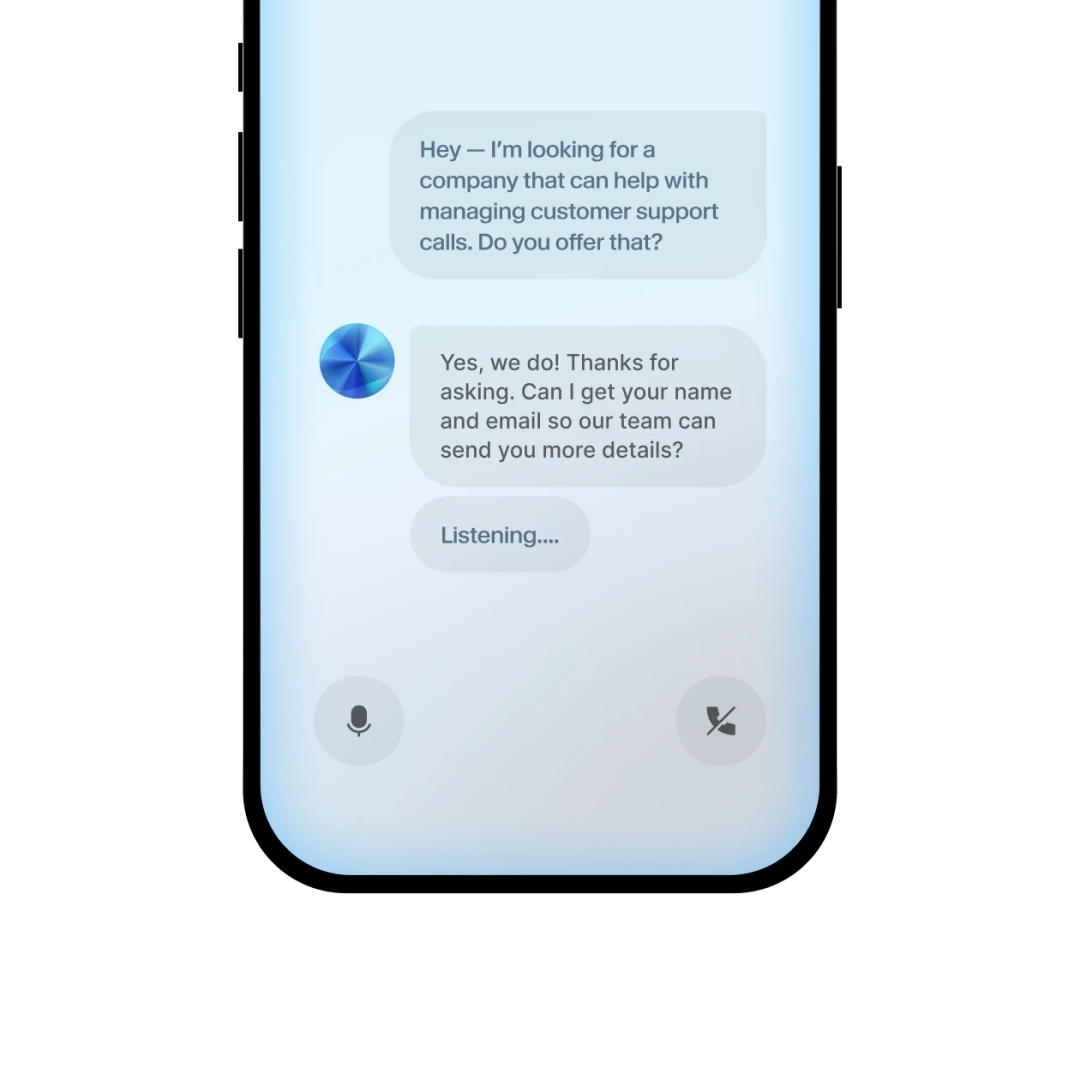

API Integration

Developers can embed speech generation into external systems. This enables use cases such as AI assistants, interactive learning platforms, and voice-enabled applications.

Common Applications

Audiobook and Media Production

Writers and publishers can convert manuscripts into narrated formats more efficiently than with traditional recording methods.

Online Education

E-learning platforms often require standardized narration across lessons. AI-generated voices can maintain consistent tone throughout course modules.

Software and Mobile Applications

Apps may use speech synthesis to read notifications, assist users with instructions, or power conversational interfaces.

Gaming and Interactive Media

Game developers can prototype character dialogue quickly using synthetic voices before final voice recording.

Accessibility Solutions

Text-to-speech systems help individuals with visual impairments or reading difficulties access written content.

Advantages

Natural Voice Output

The system aims to reduce robotic sound patterns by incorporating contextual speech modeling.

Scalability

Large volumes of narration can be generated without repeated studio sessions.

Flexible Workflow

Web-based access suits creators, while APIs support developers.

Brand Voice Consistency

Custom voice models allow organizations to maintain a uniform vocal identity.

Limitations and Considerations

Ethical Responsibility

Voice cloning requires clear permission from the original speaker. Misuse can raise legal and ethical concerns.

Subscription and Usage Limits

Most plans are structured around usage tiers or credit systems, so costs may rise as the volume of generated audio grows.
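Before committing to a plan, it can help to estimate how much text a project will actually send for synthesis. The sketch below assumes usage is metered per character and that a plan grants a fixed allowance per billing period. Both are simplifying assumptions, and the function name and figures are hypothetical; actual metering rules should be taken from the provider's pricing page.

```python
import math


def estimate_usage(scripts, allowance_per_period):
    """Summarize character usage for a batch of scripts.

    Returns (total_characters, periods_needed, overage_in_first_period).
    """
    if allowance_per_period <= 0:
        raise ValueError("allowance_per_period must be positive")
    total_chars = sum(len(script) for script in scripts)
    # Number of billing periods needed if the allowance cannot be exceeded.
    periods_needed = math.ceil(total_chars / allowance_per_period)
    # Characters beyond the allowance if everything is generated in one period.
    overage = max(0, total_chars - allowance_per_period)
    return total_chars, periods_needed, overage
```

For example, two scripts of 1,200 and 900 characters against a hypothetical 1,000-character allowance total 2,100 characters, which spans three periods or produces an overage of 1,100 characters in a single period.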

Cloud-Based Dependency

Stable internet connectivity is required since the service operates online.

Fine-Tuning Required

Optimal results may require testing different parameter settings.
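A simple way to organize that testing is to enumerate a small grid of settings and generate one sample per combination for side-by-side listening. The sketch below only produces the grid and a label for each combination. The parameter names `stability` and `similarity` follow the controls mentioned earlier in this article, while the function and label format are hypothetical.

```python
from itertools import product


def settings_grid(stabilities, similarities):
    """Return one labeled settings dict per (stability, similarity) pair."""
    return [
        {
            "stability": stability,
            "similarity": similarity,
            # The label can double as an output filename stem.
            "label": f"stab{stability:.2f}_sim{similarity:.2f}",
        }
        for stability, similarity in product(stabilities, similarities)
    ]


grid = settings_grid([0.3, 0.7], [0.5, 0.9])
```

Keeping the grid small (here, four combinations) limits usage while still showing how each control changes the output.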

Who Should Consider Using ElevenLabs?

- Content creators producing audio narration at scale

- Educational platforms standardizing lesson voiceovers

- Developers integrating speech into applications

- Game studios prototyping dialogue

- Businesses expanding multilingual content

Who Might Prefer Alternatives?

- Users who need fully offline voice generation

- Organizations with strict internal data policies

- Projects requiring exclusively human-performed narration

- Teams with very limited audio output needs

Comparison With Other AI Speech Platforms

Traditional text-to-speech engines often prioritize clarity but may lack emotional variation. Modern AI-based systems attempt to simulate human-like delivery.

ElevenLabs emphasizes expressive realism and voice cloning precision. Other providers may focus more on enterprise automation, analytics, or call center integration. The right choice depends on project goals, integration needs, and compliance considerations.

Final Educational Summary

ElevenLabs provides AI-powered tools for realistic speech generation, multilingual narration, and digital voice cloning. It supports creators and developers who need scalable voice production workflows.

While the technology improves efficiency and accessibility, responsible use is essential, particularly when cloning voices. Organizations should evaluate technical requirements, ethical considerations, and long-term usage costs before adopting any AI voice system.

Disclosure

This article is for educational and informational purposes only. Some links on this website may be affiliate links, but this does not influence our editorial content or evaluations.