Introduction

As artificial intelligence systems become increasingly integrated into digital communication, a persistent limitation has remained: most machines process language without fully interpreting human emotional signals. While natural language processing (NLP) has advanced rapidly, many applications still struggle to detect tone, sentiment shifts, and subtle emotional cues in voice, text, and facial expressions.

This challenge has contributed to the emergence of a specialized category within artificial intelligence often referred to as affective computing or emotion AI. Tools in this category attempt to interpret emotional signals and incorporate that understanding into machine responses. The broader goal is to improve how digital systems interact with people across customer support, healthcare technology, conversational interfaces, and research environments.

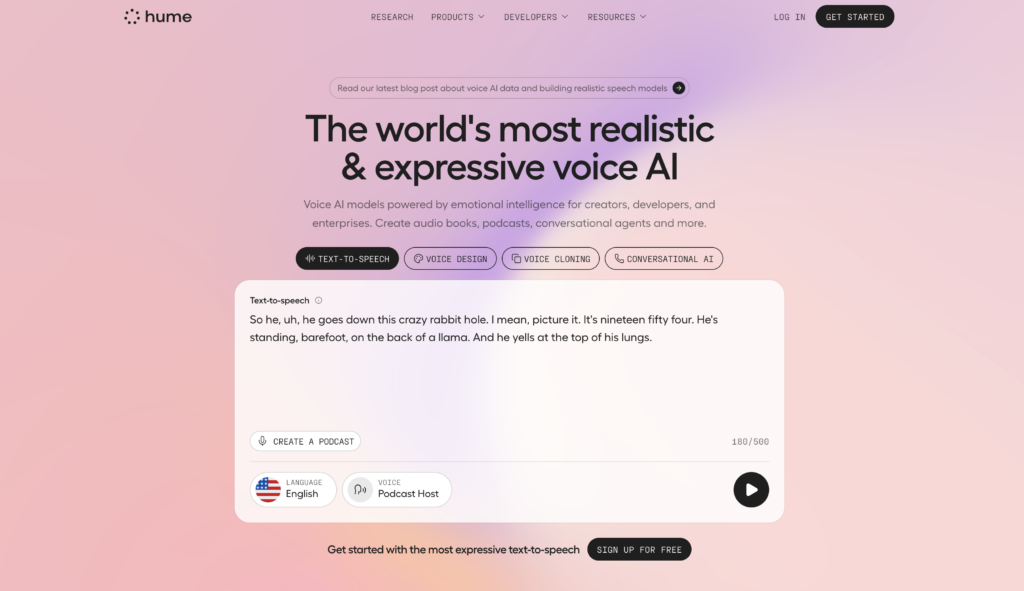

Within this context, Hume AI is one of several platforms focused on building infrastructure for emotional intelligence in AI systems. Rather than functioning as a consumer-facing chatbot or productivity application, it operates primarily as a developer platform and research-oriented toolkit designed to analyze emotional signals across multiple forms of human expression.

Understanding how platforms like Hume AI operate helps clarify how emotion-aware technologies are evolving and how they may influence the future of human–machine interaction.

What Is Hume AI?

Hume AI is an artificial intelligence platform that focuses on emotion recognition and expression analysis across voice, text, and visual signals. It provides tools, models, and APIs intended to help developers and researchers incorporate emotional context into software applications.

The platform is built around the concept of emotion measurement from multimodal data, meaning it analyzes different forms of input such as:

- Spoken language

- Facial expressions

- Written text

- Vocal tone

- Prosodic patterns in speech

Rather than simply identifying whether a statement is positive or negative, Hume AI attempts to map communication to a broader spectrum of emotional categories. These categories can include states such as amusement, confusion, admiration, distress, or relief, depending on the model configuration.

From a classification perspective, Hume AI fits into several overlapping technology domains:

- Emotion AI platforms

- Affective computing systems

- AI-powered behavioral analysis tools

- Multimodal machine learning platforms

- Developer APIs for emotional signal processing

The system is primarily designed for software developers, research institutions, and organizations building AI-driven applications where emotional context may influence system behavior or analysis.

Key Features Explained

Multimodal Emotion Recognition

One of the defining characteristics of Hume AI is its multimodal approach to emotion detection. Instead of analyzing only one signal type, the platform can interpret emotional indicators from several sources simultaneously.

For example, when analyzing a spoken interaction, the system may evaluate:

- The words being spoken

- Vocal tone and pitch

- Speech rhythm and pauses

- Facial expressions captured through video

Combining multiple signals allows the system to identify emotional patterns that might not be evident when analyzing a single data type.
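One common way to combine signals is late fusion: each modality is scored separately, and the per-emotion scores are then merged with a weighted average. The sketch below illustrates that general technique only; it is not Hume AI's documented method, and the modality names, emotion labels, and weights are illustrative assumptions.

```python
from typing import Dict

# Hypothetical per-modality output: emotion label -> score in [0, 1].
EmotionScores = Dict[str, float]


def fuse_modalities(
    per_modality: Dict[str, EmotionScores],
    weights: Dict[str, float],
) -> EmotionScores:
    """Weighted late fusion: average each emotion's score across modalities.

    Modalities without a positive weight, or absent from `per_modality`, are
    ignored. An emotion missing from a modality counts as 0 for that modality.
    """
    used = {m: w for m, w in weights.items() if m in per_modality and w > 0}
    total = sum(used.values())
    if total == 0:
        return {}
    labels = {label for m in used for label in per_modality[m]}
    return {
        label: sum(w * per_modality[m].get(label, 0.0) for m, w in used.items()) / total
        for label in labels
    }


if __name__ == "__main__":
    # Illustrative scores: the words read as calm, the voice sounds distressed.
    fused = fuse_modalities(
        {
            "text": {"calmness": 0.7, "distress": 0.1},
            "voice": {"calmness": 0.2, "distress": 0.6},
        },
        weights={"text": 0.5, "voice": 0.5},
    )
    for label, score in sorted(fused.items()):
        print(f"{label}: {score:.2f}")
```

Fixed weights are the simplest choice; a real system might learn them or weight each modality by how reliable it is for a given input.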

Voice Emotion Analysis

Voice-based emotion detection is a central capability of Hume AI. The platform evaluates acoustic features of speech, including tone, pitch variation, and pacing.

These signals can reveal emotional cues such as stress, excitement, hesitation, or confidence. Voice emotion analysis is particularly relevant in contexts like call centers, virtual assistants, and speech-driven interfaces.

The analysis considers not only what is said but also how it is said, which can offer additional insight into a speaker's sentiment and emotional state.
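Pauses are among the simplest acoustic cues to compute. The following sketch estimates a pause ratio by splitting a mono signal into fixed-size frames and counting the frames whose root-mean-square (RMS) energy falls below a threshold. It is a generic illustration of one low-level prosodic feature, not a description of Hume AI's models; the frame size and silence threshold are assumptions that would need tuning for real recordings.

```python
import math
from typing import List, Sequence


def frame_rms(samples: Sequence[float], frame_size: int) -> List[float]:
    """RMS energy of consecutive, non-overlapping frames (a trailing partial frame is dropped)."""
    energies = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_size))
    return energies


def pause_ratio(samples: Sequence[float], frame_size: int, silence_threshold: float) -> float:
    """Fraction of frames whose RMS energy is below `silence_threshold`."""
    energies = frame_rms(samples, frame_size)
    if not energies:
        return 0.0
    silent = sum(1 for e in energies if e < silence_threshold)
    return silent / len(energies)


if __name__ == "__main__":
    sample_rate = 16_000
    # Synthetic input: 1 s of a 200 Hz tone followed by 0.5 s of silence.
    tone = [0.5 * math.sin(2 * math.pi * 200 * n / sample_rate) for n in range(sample_rate)]
    silence = [0.0] * (sample_rate // 2)
    # 400-sample frames are 25 ms at 16 kHz.
    print(f"pause ratio: {pause_ratio(tone + silence, 400, 0.01):.2f}")
```

Features like this are typically only inputs to a learned model; on their own they say nothing about which emotion is present.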

Facial Expression Interpretation

Hume AI also includes tools for analyzing facial movements and expressions. These models can evaluate visual cues such as:

- Eyebrow movement

- Mouth position

- Eye engagement

- Micro-expressions

The goal is to estimate emotional signals based on patterns of facial movement. Facial analysis is often used in research settings, behavioral studies, and human–computer interaction experiments.

Because facial interpretation can vary across cultures and contexts, many implementations combine visual data with additional inputs to improve reliability.

Text-Based Emotional Analysis

In addition to speech and visual signals, Hume AI processes written language. Its text models go beyond traditional sentiment analysis by attempting to identify more nuanced emotional categories within written communication.

For example, the system may distinguish between:

- Joy and amusement

- Anxiety and fear

- Curiosity and confusion

This approach allows applications to better interpret emotional tone in messages, feedback submissions, or conversational transcripts.

Developer APIs and Integration Tools

Hume AI is structured primarily as a developer platform. Organizations integrate its capabilities through application programming interfaces (APIs) and machine learning tools.

These APIs allow developers to send data such as audio recordings, video frames, or text input to the system for analysis. The platform then returns structured emotional predictions that can be incorporated into software logic.
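On the receiving side, an application usually parses the returned predictions and acts on the strongest signals. The sketch below ranks emotions within each prediction. The JSON layout it expects (a `predictions` list whose items carry `emotions` entries with `name` and `score`) is a simplified, hypothetical shape chosen for illustration; Hume AI's API reference is the authority on the actual response schema.

```python
import json
from typing import List, Tuple


def top_emotions(response_body: str, k: int = 3) -> List[List[Tuple[str, float]]]:
    """Return the k highest-scoring emotions for each prediction.

    Assumes a hypothetical, simplified JSON shape:
    {"predictions": [{"emotions": [{"name": str, "score": float}, ...]}, ...]}
    """
    payload = json.loads(response_body)
    results = []
    for prediction in payload.get("predictions", []):
        ranked = sorted(
            ((e["name"], float(e["score"])) for e in prediction.get("emotions", [])),
            key=lambda pair: pair[1],
            reverse=True,
        )
        results.append(ranked[:k])
    return results


if __name__ == "__main__":
    # Illustrative response body, not real API output.
    body = json.dumps({
        "predictions": [
            {"emotions": [
                {"name": "Joy", "score": 0.20},
                {"name": "Confusion", "score": 0.62},
                {"name": "Amusement", "score": 0.40},
            ]}
        ]
    })
    print(top_emotions(body, k=2))
```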

Possible integration environments include:

- Web applications

- Conversational AI systems

- Research platforms

- Customer service analytics tools

The modular architecture enables developers to use specific models depending on their project requirements.

Common Use Cases

Conversational AI Systems

Emotion-aware conversational agents are one area where platforms like Hume AI are frequently explored. Traditional chatbots often respond without considering emotional tone.

By incorporating emotional analysis, a conversational interface may adapt its responses when a user appears frustrated, confused, or satisfied. This can influence dialogue strategies and escalation logic in automated systems.
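Escalation logic of this kind can be sketched as a small rule layer over per-turn emotion scores. In the example below, the emotion labels (`frustration`, `confusion`), the thresholds, and the three-turn window are hypothetical choices, not settings documented by Hume AI.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TurnDecision:
    strategy: str  # one of "default", "clarify", "escalate"
    reason: str


def choose_strategy(
    history: List[Dict[str, float]],
    frustration_threshold: float = 0.6,
    confusion_threshold: float = 0.5,
    window: int = 3,
) -> TurnDecision:
    """Pick a dialogue strategy from recent per-turn emotion scores (hypothetical labels)."""
    if not history:
        return TurnDecision("default", "no emotional signal yet")
    recent = history[-window:]
    # Average over recent turns so a single noisy prediction does not trigger escalation.
    avg_frustration = sum(t.get("frustration", 0.0) for t in recent) / len(recent)
    if avg_frustration >= frustration_threshold:
        return TurnDecision("escalate", f"sustained frustration ({avg_frustration:.2f})")
    if history[-1].get("confusion", 0.0) >= confusion_threshold:
        return TurnDecision("clarify", "latest turn suggests confusion")
    return TurnDecision("default", "no threshold crossed")


if __name__ == "__main__":
    turns = [{"frustration": 0.7}, {"frustration": 0.8}, {"frustration": 0.6}]
    print(choose_strategy(turns))
```

Smoothing over a window trades responsiveness for stability: the agent escalates a turn or two later, but less often on a single misread turn.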

Customer Experience Analysis

Organizations often collect large volumes of customer interaction data, particularly in voice-based support environments.

Emotion recognition tools can help analyze recorded conversations to identify patterns such as:

- Customer frustration during support calls

- Positive reactions to specific services

- Stress signals during complex interactions

Such insights may be used for retrospective research and operational analysis rather than during live customer interactions.
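For offline analysis, scores from many recorded calls are usually aggregated before anyone reviews them. The sketch below computes, per support queue, the share of calls whose peak frustration score reaches a threshold. The record layout, the `frustration` signal, and the 0.7 threshold are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical record: (queue name, call id, per-segment frustration scores in [0, 1]).
CallRecord = Tuple[str, str, List[float]]


def frustration_rate_by_queue(
    calls: Iterable[CallRecord], threshold: float = 0.7
) -> Dict[str, float]:
    """Share of calls per queue whose peak segment frustration is at least `threshold`."""
    totals: Dict[str, int] = defaultdict(int)
    flagged: Dict[str, int] = defaultdict(int)
    for queue, _call_id, scores in calls:
        totals[queue] += 1
        if scores and max(scores) >= threshold:
            flagged[queue] += 1
    return {queue: flagged[queue] / totals[queue] for queue in totals}


if __name__ == "__main__":
    calls = [
        ("billing", "c1", [0.2, 0.8]),
        ("billing", "c2", [0.1]),
        ("tech", "c3", [0.9]),
        ("tech", "c4", []),  # no scored segments: counted but never flagged
    ]
    print(frustration_rate_by_queue(calls))
```

Using the peak segment score flags calls with one intense moment; a mean over segments would instead favor calls with sustained frustration. Which is more useful depends on the question being asked.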

Behavioral and Social Science Research

Academic researchers studying communication patterns often require tools that can measure emotional signals at scale.

Hume AI provides automated methods for analyzing emotional expressions in large datasets, which may include interviews, recorded discussions, or multimedia experiments.

These capabilities can support studies in fields such as:

- Psychology

- Sociology

- Human–computer interaction

- Communication research

Healthcare and Wellbeing Applications

Some healthcare technology projects explore emotion recognition as a supplementary signal in mental health monitoring or telehealth environments.

Speech tone analysis, for example, may be examined as one of several indicators of emotional distress or mood shifts. In such contexts, emotion AI tools typically function as research aids or assistive analytics, not diagnostic systems.

Human–Computer Interaction Design

Developers designing interactive technologies sometimes investigate how systems can respond to emotional cues.

Emotion-aware interfaces may adjust system behavior when users appear confused, disengaged, or satisfied. Hume AI provides one of the analytical layers that can support such experimental interaction models.

Potential Advantages

Deeper Emotional Context in AI Systems

Traditional machine learning systems often rely heavily on text or structured data. Emotion AI introduces additional layers of interpretation that consider tone, expression, and behavioral signals.

This can provide richer context when analyzing human communication.

Multimodal Data Interpretation

Many AI platforms focus on a single input type. Hume AI’s multimodal design allows it to combine signals from speech, text, and visuals, which may improve interpretive accuracy in certain applications.

Research-Oriented Model Development

The platform is influenced by work in affective science and emotional psychology. This research foundation can be valuable for institutions studying emotional expression patterns.

Flexible Developer Integration

The availability of APIs allows organizations to integrate emotion analysis into custom systems rather than relying on a predefined interface.

This flexibility supports experimentation across different application environments.

Limitations & Considerations

Complexity of Emotional Interpretation

Human emotions are highly complex and influenced by cultural, contextual, and individual factors. Automated systems may misinterpret signals or overlook contextual subtleties.

Emotion recognition models should therefore be used cautiously and often alongside human judgment.

Ethical and Privacy Concerns

Analyzing emotional signals raises important ethical questions, particularly when facial or vocal data is collected.

Organizations using emotion AI technologies must consider issues related to:

- User consent

- Data storage practices

- Transparency about analysis methods

Ethical frameworks and regulatory guidelines continue to evolve in this area.

Cultural Variability

Facial expressions and vocal tones can carry different meanings across cultures. Emotion recognition models trained on limited datasets may not generalize effectively to diverse populations.

This can affect accuracy and fairness in global deployments.

Implementation Complexity

Because Hume AI functions as a developer-focused platform rather than a standalone application, organizations may need technical resources to implement and maintain integrations.

Projects involving multimodal AI systems often require expertise in machine learning, data engineering, and software architecture.

Who Should Consider Hume AI

Hume AI may be relevant for individuals or organizations involved in:

- AI research and development

- Behavioral and emotional analytics

- Academic studies involving communication signals

- Conversational AI experimentation

- Human–computer interaction design

Software teams building systems that attempt to respond to emotional cues may find tools like Hume AI useful as part of their technical stack.

Who May Want to Avoid It

Certain users may find Hume AI less suitable for their needs.

These may include:

- Individuals looking for simple AI chatbot tools

- Small teams without technical development capacity

- Projects that do not require emotional analysis

- Organizations seeking fully packaged consumer software

Because the platform is designed for developers and researchers, nontechnical users may encounter a steep learning curve.

Comparison With Similar Tools

Several platforms operate in the broader field of emotion recognition and affective computing. While they may differ in model design or deployment method, they share the goal of interpreting emotional signals.

Many emotion AI platforms focus primarily on either facial analysis or voice sentiment detection. Some emphasize video-based expression analysis, while others prioritize speech analytics for customer service environments.

Hume AI differs from many single-focus tools by emphasizing multimodal emotional analysis across multiple signal types. This allows developers to combine data sources rather than relying on a single emotional indicator.

However, some competing platforms offer more specialized solutions, such as advanced facial micro-expression tracking or speech-only emotional detection optimized for call center analytics.

In practice, organizations often evaluate multiple systems depending on their specific use case, dataset availability, and technical requirements.

Final Educational Summary

Emotion recognition technologies represent a developing area within artificial intelligence, shaped by ongoing research in psychology, communication science, and machine learning.

Hume AI operates within this emerging field as a platform designed to analyze emotional signals across speech, text, and visual inputs. Its multimodal models aim to detect patterns in human expression that may reveal emotional states or communication intent.

The platform is primarily oriented toward developers and researchers seeking to incorporate emotional awareness into digital systems. Through APIs and analytical tools, Hume AI enables experimentation with emotion-aware interfaces, behavioral research applications, and conversational AI enhancements.

At the same time, the technology operates within a complex ethical and technical landscape. Emotional interpretation by machines remains an evolving challenge, influenced by cultural differences, contextual factors, and privacy considerations.

Understanding platforms like Hume AI therefore requires examining both their technological capabilities and the broader questions surrounding emotion-aware artificial intelligence.
