Introduction

Modern computing workloads increasingly rely on high-performance hardware. Fields such as machine learning, artificial intelligence development, data science, and large-scale simulations require specialized processors capable of handling parallel computations. Graphics Processing Units (GPUs) have become central to these workloads because they execute thousands of arithmetic operations in parallel. This lets them process large datasets and highly parallel algorithms, such as the matrix arithmetic behind neural networks, far more efficiently than traditional central processing units (CPUs).

However, obtaining and maintaining GPU hardware can be expensive and technically demanding. Organizations and independent developers often face challenges related to hardware procurement, maintenance, scalability, and energy consumption. These limitations have led to the development of cloud-based GPU infrastructure platforms. Such services allow users to access powerful computing resources remotely without managing physical machines.

Within this broader ecosystem of cloud GPU infrastructure, RunPod represents a platform designed to provide on-demand access to GPU-powered computing environments. The platform belongs to a category often described as GPU cloud computing or GPU infrastructure-as-a-service. These services aim to simplify the process of running compute-intensive workloads while reducing the operational complexity associated with physical hardware.

Understanding how platforms like RunPod operate can help clarify how modern developers, researchers, and technology teams approach scalable computing.

What Is RunPod?

RunPod is a cloud-based GPU infrastructure platform that provides remote access to high-performance computing environments. The service allows users to deploy GPU-equipped compute instances, which RunPod calls pods, for tasks that require significant processing power.

In practical terms, RunPod operates as a distributed cloud computing environment where users can allocate GPU resources for machine learning workloads, AI model training, data processing pipelines, and other computational tasks. Rather than purchasing physical GPU servers, users interact with a web-based platform to create and manage GPU instances.

The architecture typically involves containerized environments, virtualization technologies, and remote orchestration tools. These components enable developers to deploy software stacks tailored to their computational requirements. In many cases, platforms like RunPod support frameworks commonly used in artificial intelligence research, including deep learning libraries and data processing tools.

RunPod also fits within the broader ecosystem of developer infrastructure platforms that prioritize flexibility and cost-efficient compute scaling. Instead of committing to long-term hardware investments, users can allocate resources dynamically based on workload demand.

Because GPU-intensive work is often intermittent, concentrated in model training cycles or data experimentation phases, paying for capacity only while it is in use can suit these variable workloads better than owning hardware that sits idle between jobs.

Key Features Explained

On-Demand GPU Instances

One of the central components of RunPod is the ability to create GPU instances when needed. Users can deploy machines equipped with various GPU models depending on the computational requirements of their workloads.

This approach allows developers to select appropriate configurations for tasks such as neural network training, computer vision processing, or natural language processing experiments.
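Choosing a configuration usually starts with a memory estimate. The sketch below uses a common rule of thumb (roughly 2 bytes per parameter for fp16 inference, roughly 16 bytes per parameter for mixed-precision Adam training) times an assumed safety margin, then picks the smallest card that fits. The catalog, the `overhead` factor, and the function names are illustrative assumptions, not RunPod's offering or API.

```python
from typing import Dict, Optional

# Illustrative catalog of GPU models and their memory in GB. The sizes are the
# cards' published specs; whether a provider offers each one at a given moment
# is an assumption.
GPU_CATALOG: Dict[str, int] = {
    "RTX 4090": 24,
    "A40": 48,
    "A100 80GB": 80,
    "H100 80GB": 80,
}


def estimate_vram_gb(num_params: float, bytes_per_param: float,
                     overhead: float = 1.2) -> float:
    """Rough GPU memory estimate in GB.

    bytes_per_param covers weights plus per-parameter training state
    (about 2 for fp16 inference, about 16 for mixed-precision Adam training).
    `overhead` is a guessed margin for activations and framework buffers,
    not a measured value.
    """
    return num_params * bytes_per_param * overhead / 1e9


def pick_gpu(required_gb: float, catalog: Dict[str, int]) -> Optional[str]:
    """Return the GPU with the least memory that still fits, or None if no
    single card is large enough (the job would need multiple GPUs)."""
    fits = [(mem, name) for name, mem in catalog.items() if mem >= required_gb]
    return min(fits)[1] if fits else None


if __name__ == "__main__":
    # 7B-parameter model served in fp16: 7e9 * 2 * 1.2 / 1e9 ≈ 16.8 GB
    print(pick_gpu(estimate_vram_gb(7e9, 2), GPU_CATALOG))   # RTX 4090
    # Full fine-tune of the same model with Adam: ≈ 134 GB, no single card fits
    print(pick_gpu(estimate_vram_gb(7e9, 16), GPU_CATALOG))  # None
```

The estimate deliberately ignores batch size and sequence length, which can dominate activation memory; treat it as a first filter before measuring on real hardware.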

Container-Based Environments

RunPod relies on containerization to simplify application deployment: workloads run inside containers launched from container images. Containers package software dependencies, runtime environments, and configuration settings into portable units.

For developers working with machine learning frameworks like PyTorch or TensorFlow, containerized environments help ensure compatibility across different systems. Containers also make it easier to reproduce experiments and share environments across teams.

Serverless GPU Options

Alongside persistent instances, RunPod offers a serverless option. In this model, users run specific workloads without managing persistent servers; instead, jobs are sent to workers that start when requests arrive and can scale back down when no work is queued.

Serverless GPU execution can be useful for workloads such as batch inference jobs or data processing tasks where continuous servers are unnecessary.
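A serverless workload is typically written as a single function that receives one job and returns a result. The sketch below assumes the shape used by RunPod's Python SDK, where the job's payload sits under `job["input"]`; the `texts`/`outputs`/`error` keys and the token-counting "model" are hypothetical stand-ins so the example runs without the SDK or a GPU.

```python
from typing import Any, Callable, Dict, List


def load_model() -> Callable[[List[str]], List[int]]:
    """Hypothetical stand-in for loading model weights onto the GPU.

    Here the "model" just counts whitespace-separated tokens in each text.
    """
    return lambda texts: [len(t.split()) for t in texts]


# Loaded once at module scope, so a warm worker reuses it across jobs instead
# of paying the load cost on every request.
MODEL = load_model()


def handler(job: Dict[str, Any]) -> Dict[str, Any]:
    """Process one job and return a JSON-serializable result.

    The payload is read from job["input"]; the keys inside it are our own
    hypothetical schema.
    """
    payload = job.get("input")
    texts = payload.get("texts") if isinstance(payload, dict) else None
    if not isinstance(texts, list) or not all(isinstance(t, str) for t in texts):
        return {"error": "expected 'texts' to be a list of strings"}
    return {"outputs": MODEL(texts)}


# On a RunPod worker, the handler would be registered with the SDK, e.g.
#   import runpod
#   runpod.serverless.start({"handler": handler})
# (check RunPod's current serverless documentation before relying on this).

if __name__ == "__main__":
    # Local smoke test without the SDK or a GPU
    print(handler({"input": {"texts": ["a b c", "hello"]}}))  # {'outputs': [3, 1]}
```

Keeping expensive setup outside the handler is the main design choice here: a worker may serve many jobs between cold starts, so only per-request work belongs inside the function.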

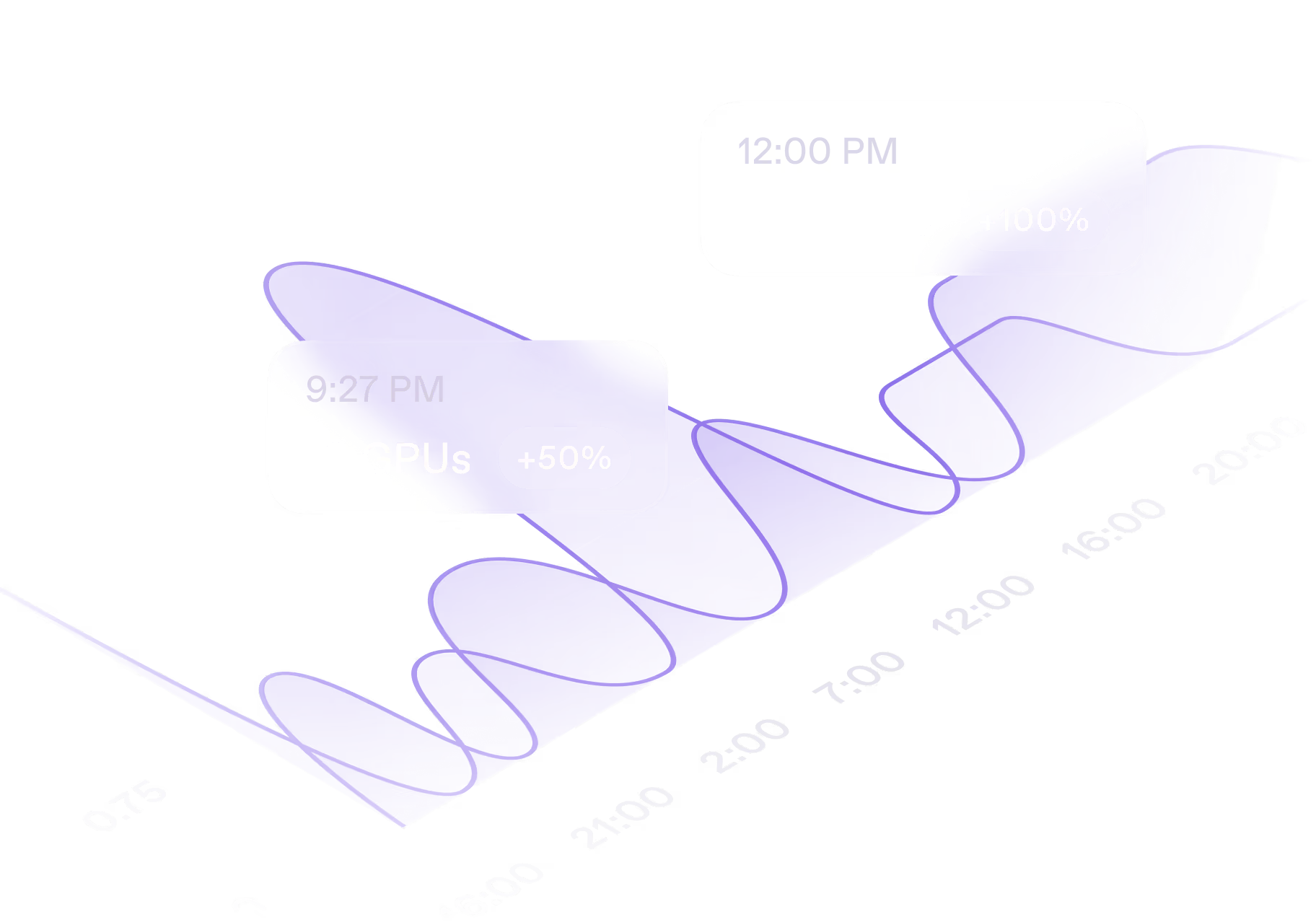

Distributed Compute Architecture

RunPod uses distributed infrastructure across multiple GPU nodes. This architecture enables scaling across several machines when workloads demand higher performance.

For example, training large machine learning models may require parallel processing across multiple GPUs. Distributed environments can reduce training time while enabling larger model architectures.
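The most common form of multi-GPU training is data parallelism: each GPU computes gradients on its own slice of the batch, and the gradients are averaged (an all-reduce) before every update. The pure-Python simulation below illustrates that idea on a one-parameter linear model; it is not RunPod-specific, and real systems would use a framework such as PyTorch's DistributedDataParallel rather than these hypothetical helpers.

```python
from typing import List, Sequence


def grad_mse(w: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """d/dw of mean((w*x - y)^2) for the one-parameter model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def shard(data: Sequence[float], num_workers: int) -> List[Sequence[float]]:
    """Split data into num_workers equal, contiguous shards."""
    if len(data) % num_workers != 0:
        raise ValueError("this sketch assumes the batch divides evenly across workers")
    size = len(data) // num_workers
    return [data[i * size:(i + 1) * size] for i in range(num_workers)]


def data_parallel_grad(w: float, xs: Sequence[float], ys: Sequence[float],
                       num_workers: int) -> float:
    """Each simulated worker computes the gradient on its own shard; averaging
    the local gradients plays the role of the all-reduce step."""
    local = [grad_mse(w, xs_i, ys_i)
             for xs_i, ys_i in zip(shard(xs, num_workers), shard(ys, num_workers))]
    return sum(local) / num_workers


def train(xs: Sequence[float], ys: Sequence[float], num_workers: int,
          lr: float = 0.01, steps: int = 50) -> float:
    """Plain gradient descent using the data-parallel gradient."""
    w = 0.0
    for _ in range(steps):
        w -= lr * data_parallel_grad(w, xs, ys, num_workers)
    return w


if __name__ == "__main__":
    xs = [float(i) for i in range(1, 9)]
    ys = [2.0 * x for x in xs]
    print(train(xs, ys, num_workers=4))  # converges toward 2.0
```

With equal-sized shards, the average of the per-shard mean gradients equals the full-batch gradient, so adding workers splits the arithmetic without changing the result of each step.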

Customizable Software Environments

Developers often require specific libraries, frameworks, and operating system configurations. RunPod allows users to customize their software stack through container images or configuration settings.

This flexibility supports a wide variety of workloads, including:

- AI model training
- Data science experimentation
- Video rendering
- Scientific simulations
- Large-scale data analysis

Persistent Storage and Data Access

GPU workloads frequently involve large datasets. RunPod environments typically support persistent storage systems that allow data to remain accessible across sessions.

Persistent storage ensures that training datasets, experiment outputs, and model checkpoints remain available even after compute instances are shut down.
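Persistent storage only helps if the training code actually writes checkpoints and resumes from them. The sketch below saves state atomically to a directory and restarts from the newest checkpoint. The default path `/workspace/checkpoints` assumes a persistent volume mounted at `/workspace`, and the `CHECKPOINT_DIR` variable and the loss-decay "training step" are hypothetical placeholders.

```python
import json
import os
import tempfile
from typing import Any, Dict, Optional

# Assumed mount point of the persistent volume; check where storage is
# mounted in your own instance configuration.
CHECKPOINT_DIR = os.environ.get("CHECKPOINT_DIR", "/workspace/checkpoints")


def save_checkpoint(state: Dict[str, Any], directory: str, step: int) -> str:
    """Write state for `step` atomically and return the checkpoint path."""
    os.makedirs(directory, exist_ok=True)
    # Zero-padded step numbers make lexical order match numeric order.
    path = os.path.join(directory, f"step_{step:08d}.json")
    # Write to a temp file in the same directory, then rename it into place,
    # so readers never see a half-written checkpoint file.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump({"step": step, "state": state}, f)
    os.replace(tmp_path, path)
    return path


def load_latest_checkpoint(directory: str) -> Optional[Dict[str, Any]]:
    """Return the newest checkpoint in `directory`, or None if there is none."""
    if not os.path.isdir(directory):
        return None
    names = sorted(n for n in os.listdir(directory)
                   if n.startswith("step_") and n.endswith(".json"))
    if not names:
        return None
    with open(os.path.join(directory, names[-1])) as f:
        return json.load(f)


def run_training(directory: str, total_steps: int, save_every: int = 1) -> Dict[str, Any]:
    """Resume from the latest checkpoint (if any) and run up to total_steps."""
    ckpt = load_latest_checkpoint(directory)
    start = ckpt["step"] + 1 if ckpt else 0
    state = ckpt["state"] if ckpt else {"loss": 1.0, "steps_run": 0}
    for step in range(start, total_steps):
        state["loss"] *= 0.9  # stand-in for one real optimizer step
        state["steps_run"] += 1
        if (step + 1) % save_every == 0 or step == total_steps - 1:
            save_checkpoint(state, directory, step)
    return state
```

After an instance is stopped and a new one is attached to the same volume, calling `run_training(CHECKPOINT_DIR, total_steps=1000, save_every=50)` again would pick up from the last saved step instead of starting over.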

Common Use Cases

Machine Learning Model Training

Training deep learning models is one of the most common reasons developers use GPU cloud infrastructure. Large neural networks require extensive matrix computations, which GPUs handle efficiently.

Researchers and engineers often train models for tasks such as image classification, speech recognition, and recommendation systems using cloud GPU platforms.

AI Research and Experimentation

Academic researchers and AI practitioners frequently conduct experiments involving different model architectures and datasets. Cloud GPU infrastructure allows them to test hypotheses without purchasing specialized hardware.

RunPod environments can support rapid experimentation cycles where models are trained, evaluated, and modified repeatedly.

Inference and Model Deployment

Once machine learning models are trained, they often need to be deployed for inference. GPU-powered environments can serve requests faster than CPU-only servers, particularly for applications involving computer vision or natural language processing.

Inference workloads may involve analyzing images, generating text responses, or performing predictive analytics.

Data Processing and Analytics

Large datasets require substantial computational resources to process efficiently. GPU infrastructure can accelerate data transformation tasks such as feature extraction, large-scale filtering, and data preparation for machine learning pipelines.

Creative and Rendering Workflows

Although primarily associated with AI workloads, GPU platforms can also support visual computing tasks. These include 3D rendering, video encoding, simulation environments, and graphical modeling.

Potential Advantages

Reduced Hardware Investment

Purchasing high-end GPUs and maintaining server infrastructure can require significant financial investment. Cloud GPU services provide an alternative where resources are accessed temporarily without long-term ownership.

This approach may be particularly relevant for independent developers or small research teams.

Scalable Computing Resources

One advantage of cloud infrastructure is the ability to scale computing resources according to workload demand. Developers can increase GPU capacity during training cycles and reduce usage afterward.

This flexibility allows projects to adapt to varying computational requirements.
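One simple way to express demand-based scaling is to size the worker pool from the amount of queued work, within fixed bounds. The policy below is a hypothetical illustration, not RunPod's autoscaler; it assumes one GPU per worker and a known number of jobs each worker can handle per hour.

```python
import math
from typing import Sequence


def desired_workers(queued_jobs: int, jobs_per_worker: int,
                    min_workers: int = 0, max_workers: int = 10) -> int:
    """Workers needed to drain the queue, clamped to [min_workers, max_workers].

    min_workers=0 lets capacity scale to zero when no work is queued.
    """
    if jobs_per_worker <= 0:
        raise ValueError("jobs_per_worker must be positive")
    needed = math.ceil(queued_jobs / jobs_per_worker)
    return max(min_workers, min(max_workers, needed))


def gpu_hours(hourly_queue: Sequence[int], jobs_per_worker: int, max_workers: int) -> int:
    """GPU-hours used if each hour runs desired_workers(...) workers."""
    return sum(desired_workers(q, jobs_per_worker, 0, max_workers) for q in hourly_queue)


if __name__ == "__main__":
    queue = [0, 0, 8, 20, 4, 0]   # hypothetical queued jobs per hour
    print(gpu_hours(queue, 4, 5))  # 8  (0 + 0 + 2 + 5 + 1 + 0)
    print(5 * len(queue))          # 30 (peak capacity kept running all six hours)
```

In this made-up trace, scaling with demand uses 8 GPU-hours where provisioning for the peak would use 30; real savings depend on how bursty the workload is and on start-up latency.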

Faster Experimentation Cycles

Machine learning research often involves repeated experimentation. Access to high-performance GPUs can shorten training time, allowing researchers to iterate on models more quickly.

Cloud environments also reduce the setup time required to configure hardware.

Geographic Accessibility

Cloud infrastructure platforms make high-performance computing available to users in many regions without requiring access to local data centers or specialized laboratories.

This accessibility can broaden participation in AI development and data science research.

Standardized Development Environments

Containerized computing environments can simplify collaboration between teams. When the same container image is used across different machines, the likelihood of configuration-related issues decreases.

This consistency can help maintain reproducibility in research workflows.

Limitations & Considerations

Cost Variability

Although cloud GPU platforms remove the need for upfront hardware purchases, costs can accumulate over time depending on usage patterns. Continuous workloads may result in higher long-term expenses compared to owned hardware.

Organizations must evaluate usage frequency when determining whether cloud infrastructure is economically appropriate.
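A first-order way to compare renting with buying is the break-even point: the number of usage hours after which the purchase price has been recovered through lower hourly costs. The sketch below assumes a flat cloud hourly rate and a flat running cost for owned hardware, and ignores depreciation, resale value, and staff time; all prices in the example are placeholders, not quotes from any provider.

```python
import math


def break_even_hours(purchase_cost: float, cloud_rate_per_hour: float,
                     owned_cost_per_hour: float) -> float:
    """Usage hours after which buying becomes cheaper than renting.

    owned_cost_per_hour covers running costs of owned hardware (power,
    cooling, hosting). Depreciation, resale value and staff time are ignored.
    """
    margin = cloud_rate_per_hour - owned_cost_per_hour
    if margin <= 0:
        # Renting is never more expensive per hour, so buying never pays off.
        return math.inf
    return purchase_cost / margin


def months_to_break_even(purchase_cost: float, cloud_rate_per_hour: float,
                         owned_cost_per_hour: float, hours_per_month: float) -> float:
    """Break-even point expressed in calendar months at a given usage level."""
    return break_even_hours(purchase_cost, cloud_rate_per_hour,
                            owned_cost_per_hour) / hours_per_month


if __name__ == "__main__":
    # Placeholder prices for illustration only.
    for hours in (720, 100):  # running around the clock vs. occasional use
        months = months_to_break_even(10_000, 2.0, 0.5, hours)
        print(f"{hours} h/month: break-even after {months:.1f} months")
```

With these placeholder figures, continuous use (720 hours a month) recovers the purchase in about 9.3 months, while 100 hours a month takes about 66.7 months, which is why usage frequency matters so much in the comparison.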

Data Transfer Constraints

Large datasets may require significant bandwidth to transfer into cloud environments. In some situations, transferring terabytes of training data can introduce delays or additional costs.

Efficient data management strategies are often necessary when working with cloud infrastructure.
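Transfer time is easy to estimate before committing to a plan: convert the dataset size to bits and divide by the effective link rate. For example, 1 TB is 8 × 10^12 bits, so even a fully utilized 1 Gbit/s link needs 8,000 seconds (about 2.2 hours). The helper below is a sketch; the default `efficiency` of 0.8 is an assumption about protocol overhead and contention, not a measured figure.

```python
def transfer_hours(size_tb: float, bandwidth_gbps: float, efficiency: float = 0.8) -> float:
    """Hours to move size_tb terabytes (decimal, 10**12 bytes) over a link of
    bandwidth_gbps gigabits per second.

    `efficiency` is the assumed fraction of the nominal link rate actually
    achieved after protocol overhead and contention.
    """
    if bandwidth_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("bandwidth must be positive and efficiency in (0, 1]")
    bits = size_tb * 1e12 * 8
    seconds = bits / (bandwidth_gbps * 1e9 * efficiency)
    return seconds / 3600


if __name__ == "__main__":
    for tb in (1, 10):
        for gbps in (0.1, 1, 10):
            print(f"{tb:>3} TB over {gbps:>4} Gbit/s: {transfer_hours(tb, gbps):6.1f} h")
```

Running the table for your own dataset sizes and upload bandwidth shows quickly whether it is worth compressing data, transferring only a subset, or keeping datasets on persistent storage between sessions.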

Infrastructure Complexity

Despite efforts to simplify deployment, cloud computing environments still require technical understanding. Users must manage containers, storage systems, networking configurations, and resource allocation.

Developers without prior experience in cloud infrastructure may face an initial learning curve.

Resource Availability

Because cloud GPU resources are shared among users, availability may vary depending on demand. Some GPU models may be temporarily unavailable during periods of high usage.

Planning workloads around resource availability can sometimes be necessary.
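One way to plan around availability is to list acceptable GPU types in order of preference and retry with backoff when none is free. In the sketch below, `fetch_availability` is a hypothetical callable standing in for whatever console or API reports free capacity; the GPU names, retry count, and delays are illustrative assumptions.

```python
import time
from typing import Callable, Mapping, Optional, Sequence


def choose_gpu(preferences: Sequence[str], available: Mapping[str, int],
               gpus_needed: int = 1) -> Optional[str]:
    """Return the first preferred GPU type with enough free units, else None."""
    for gpu in preferences:
        if available.get(gpu, 0) >= gpus_needed:
            return gpu
    return None


def wait_for_gpu(preferences: Sequence[str],
                 fetch_availability: Callable[[], Mapping[str, int]],
                 gpus_needed: int = 1, attempts: int = 5,
                 base_delay_s: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> Optional[str]:
    """Poll availability, falling back through `preferences`, with exponential
    backoff between attempts. Returns None if nothing frees up in time."""
    for attempt in range(attempts):
        choice = choose_gpu(preferences, fetch_availability(), gpus_needed)
        if choice is not None:
            return choice
        if attempt < attempts - 1:
            sleep(base_delay_s * 2 ** attempt)  # 30 s, 60 s, 120 s, ...
    return None
```

Passing `sleep` as a parameter keeps the function testable; in practice, the preference list is where a team encodes which slower or pricier GPUs it is willing to accept when its first choice is unavailable.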

Security and Compliance

Organizations working with sensitive data must evaluate the security policies and compliance frameworks associated with cloud infrastructure providers. Data protection practices vary across platforms and regions.

Who Should Consider RunPod

RunPod may be relevant for several groups of technology professionals and researchers:

- Machine learning engineers who require GPU resources for model training and experimentation.
- AI researchers and academic institutions conducting deep learning research or computational experiments.
- Startups building AI-powered products that require flexible GPU infrastructure during development phases.
- Data scientists working with large datasets that benefit from parallel computation.
- Developers exploring generative AI systems, including large language models and image generation technologies.

These users often benefit from temporary access to GPU clusters without maintaining dedicated hardware.

Who May Want to Avoid It

Despite its flexibility, RunPod may not be necessary for all computing scenarios.

Small software projects that rely primarily on standard CPU processing may not require GPU infrastructure.

Organizations with existing on-premise GPU clusters may prefer to continue using internal hardware if their infrastructure already supports their workloads.

Users without technical experience in cloud environments may find simpler managed platforms more accessible if they do not need full control over infrastructure.

Additionally, teams working with highly regulated datasets may require specialized cloud providers with advanced compliance certifications.

Comparison With Similar Tools

RunPod exists within a competitive landscape of GPU cloud infrastructure providers. Several platforms offer comparable services, though they differ in pricing models, deployment complexity, and infrastructure architecture.

Traditional Cloud Providers

Large cloud providers offer GPU-enabled virtual machines as part of broader infrastructure platforms. These environments often include extensive networking tools, database services, and enterprise-scale management features.

However, these ecosystems may involve more complex configuration processes compared to specialized GPU platforms.

Dedicated AI Infrastructure Platforms

Some services focus specifically on machine learning infrastructure. These platforms provide preconfigured environments for model training and data science workflows.

Compared to general-purpose cloud providers, specialized AI platforms sometimes prioritize developer convenience and faster environment setup.

Decentralized GPU Networks

Another emerging category includes distributed GPU marketplaces where independent hardware providers contribute computing resources to a shared network. These systems aim to create more flexible GPU supply through decentralized infrastructure models.

RunPod shares certain characteristics with this category (its Community Cloud tier runs on GPUs supplied by third-party hosts) but maintains centralized management of the platform.

Final Educational Summary

The expansion of artificial intelligence, machine learning, and large-scale data processing has significantly increased demand for high-performance computing infrastructure. GPUs play a critical role in enabling these workloads, but acquiring and maintaining such hardware presents logistical and financial challenges.

RunPod represents one approach to addressing this challenge through cloud-based GPU infrastructure. By providing remote access to powerful computing environments, the platform enables developers and researchers to run computational workloads without maintaining physical GPU servers.

Through features such as containerized environments, distributed computing architecture, and on-demand resource allocation, RunPod supports a range of technical applications including AI model training, data science experimentation, and GPU-accelerated data processing.

At the same time, cloud GPU platforms introduce considerations related to cost management, data transfer, infrastructure complexity, and resource availability. Evaluating these factors is essential when determining whether cloud GPU infrastructure aligns with a project’s technical and operational needs.

As demand for scalable computing continues to grow, platforms like RunPod illustrate how cloud-based infrastructure is evolving to support modern AI development and high-performance computing workloads.

Disclosure: This article is for educational and informational purposes only. Some links on this website may be affiliate links, but this does not influence our editorial content or